OCR (Optical Character Recognition) converts scanned documents and images into machine-readable text. Generative AI (GenAI) uses large language models to understand, reason about, and structure that text into validated outputs. In health insurance claims processing, OCR is the digitization layer and GenAI is the intelligence layer. Neither works optimally without the other. Together, they form the foundation of modern AI document processing for health insurance.

Why Digitization Alone Is Not Enough

A hybrid OCR and GenAI pipeline deployed at Fullerton Health across nine APAC markets achieved a 300x speed improvement over manual processing, reaching 95% document classification accuracy and 87% field-level extraction accuracy at under two seconds per claim, according to a January 2025 paper published on arXiv. That result was not possible with OCR alone. It required OCR and GenAI working in sequence.

For APAC third-party administrators processing health insurance claims, the stakes are high. Documents arrive as handwritten prescriptions in Devanagari, multi-column hospital bills in Bahasa Indonesia, faxed discharge summaries in Traditional Chinese, and degraded photocopies of pharmacy receipts. Generic OCR and GenAI claims pipelines built for Western markets do not hold up against this level of variability.

OCR converts a scanned claim document into text, but it cannot interpret context, recover from character errors, or handle regional-language handwriting at production scale. GenAI fills that gap. APAC TPAs need both technologies working in sequence, not as alternatives.

“OCR digitizes a claim. GenAI understands it. APAC TPAs that treat these as the same technology are solving half the problem.”

What OCR Does (and Where It Breaks Down)

OCR reads text from images by detecting character shapes pixel by pixel. It works well on clean, printed, single-language documents with consistent layouts. It fails on handwritten scripts, degraded scans, multi-column hospital bills, and the mixed-language content that defines everyday health claims across APAC.

How OCR Works: The Pixel-to-Text Pipeline

A standard OCR engine segments an image into regions, detects character boundaries, matches shapes against a trained character set, and outputs a string of text. This process is fast and deterministic. For structured printed forms with clear fonts and standard layouts, it remains the most cost-efficient option available.

The problem is that most health claim documents in APAC are not structured printed forms with clear fonts. They are photographs of handwritten prescriptions, photocopies of multi-page hospital invoices, and fax scans of discharge summaries, often in languages that most OCR engines were not trained to recognize reliably.

The Four Failure Modes in APAC Health Claims

Handwritten regional scripts are the first failure mode. Research from Sarvam AI (2025) reports that handwritten Devanagari, where cursive joining, half-consonants, and diacritics vary dramatically between writers, produces character error rates above 30% even on clean scans when processed with tools like Tesseract, which are trained predominantly on printed Latin text.

Multi-column hospital bills in Indonesia present a second failure mode. Merged cells, inconsistent column widths, and numeric fields that run across merged rows break standard zonal OCR approaches that rely on predictable field positions.

Bahasa Indonesia pharmacy receipts are the third failure mode. Drug abbreviations, dose notations, and frequency codes are not standardized across pharmacy chains, and OCR cannot infer what a truncated or misspelled entry was intended to mean.

Low-resolution fax and mobile-scan submissions are the fourth failure mode. Image quality degrades through each generation of copying, and standard OCR accuracy drops sharply below 150 DPI, a threshold regularly missed by documents sent via fax or photographed under poor lighting.
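The 150 DPI threshold can be checked before a document reaches the OCR engine. The following is a minimal sketch, assuming the page is A4 and that effective resolution is estimated from pixel dimensions, since image files may lack reliable DPI metadata. The function names and the A4 assumption are illustrative and do not come from any of the systems cited here.

```python
# Hypothetical pre-OCR gate: estimate effective DPI from pixel size and an
# assumed physical page size, then flag scans below the 150 DPI threshold.
A4_WIDTH_IN = 8.27    # assumed page width in inches (A4)
A4_HEIGHT_IN = 11.69  # assumed page height in inches (A4)
MIN_DPI = 150         # threshold named in the text


def effective_dpi(width_px: int, height_px: int,
                  page_w_in: float = A4_WIDTH_IN,
                  page_h_in: float = A4_HEIGHT_IN) -> float:
    """Return the lower of the horizontal and vertical DPI estimates."""
    if width_px <= 0 or height_px <= 0:
        raise ValueError("pixel dimensions must be positive")
    return min(width_px / page_w_in, height_px / page_h_in)


def needs_quality_flag(width_px: int, height_px: int) -> bool:
    """True when the scan falls below the minimum DPI and should be flagged."""
    return effective_dpi(width_px, height_px) < MIN_DPI
```

A flagged document could be sent for enhancement or re-submission rather than passed to OCR, where the low resolution would likely produce unreliable text.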

What Generative AI Adds: From Text to Structured Intelligence

GenAI adds contextual understanding, field inference from partial data, anomaly detection, and multilingual normalization on top of OCR output. It can infer a missing ICD-10 code from surrounding clinical context, map regional drug names to their generic equivalents, and flag a claim amount that conflicts with a line-item breakdown, all in a single pass.

“A generative AI model reading a handwritten Hindi prescription does not just recognize characters. It resolves clinical abbreviations, maps them to ICD-10 codes, and flags inconsistencies in the same pass.”

Contextual Field Inference

When an OCR engine produces garbled text from a handwritten field, a downstream LLM can use the surrounding context to recover the intended value. A doctor writing “Amox 500 BD x 10d” may produce a string of mixed characters through OCR, but the LLM understands from context that this is Amoxicillin 500mg, twice daily, for 10 days. That normalized entry maps to a claim line item and a reimbursable amount, and it can be checked against the ICD-10 code recorded for the diagnosis.
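To make the target of that inference concrete, here is a rule-based stand-in that expands the clean shorthand into structured fields. It is only a sketch of the output shape: it does not recover garbled OCR text, which is the part that needs an LLM. The abbreviation tables and the `Medication` type are assumptions for illustration.

```python
import re
from dataclasses import dataclass

# Illustrative lookup tables. A production system would need a much larger,
# clinically reviewed vocabulary; these entries are assumptions.
DRUG_ABBREVIATIONS = {"amox": "Amoxicillin"}
FREQUENCY_CODES = {"OD": 1, "BD": 2, "TDS": 3, "QID": 4}  # doses per day


@dataclass
class Medication:
    name: str
    strength_mg: int
    doses_per_day: int
    duration_days: int


# Matches shorthand of the form "<drug> <strength> <frequency> x <days>d".
PATTERN = re.compile(
    r"^\s*(?P<drug>[A-Za-z]+)\s+(?P<strength>\d+)\s*(?:mg)?\s+"
    r"(?P<freq>[A-Za-z]+)\s*x\s*(?P<days>\d+)\s*d\s*$",
    re.IGNORECASE,
)


def parse_prescription(line: str) -> Medication:
    """Expand prescription shorthand into normalized medication fields."""
    match = PATTERN.match(line)
    if match is None:
        raise ValueError(f"unrecognized prescription shorthand: {line!r}")
    drug_key = match["drug"].lower()
    freq_key = match["freq"].upper()
    if drug_key not in DRUG_ABBREVIATIONS:
        raise ValueError(f"unknown drug abbreviation: {match['drug']!r}")
    if freq_key not in FREQUENCY_CODES:
        raise ValueError(f"unknown frequency code: {match['freq']!r}")
    return Medication(
        name=DRUG_ABBREVIATIONS[drug_key],
        strength_mg=int(match["strength"]),
        doses_per_day=FREQUENCY_CODES[freq_key],
        duration_days=int(match["days"]),
    )
```

A fixed pattern like this breaks as soon as the OCR misreads a character, which is the gap the LLM layer is meant to close.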

The LLM-AIx research paper (PMC, 2024) demonstrated this recovery capability directly: the LLM correctly identified field values that the OCR had misread, such as “N1” rendered as “N:” and Roman numeral “I/IV” rendered as “1/9” from low-quality scans.

Anomaly Detection and Fraud Flags

GenAI layers can compare extracted claim data against policy terms, historical billing patterns, and clinical logic in a single prompt. A claim where the prescribed medication does not match the listed diagnosis, or where the amount billed conflicts with the itemized procedure list, gets flagged before it reaches the adjudicator. According to a 2024 ACFE study cited by Writer.com, 83% of companies are expected to use generative AI for fraud detection by 2026.
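One of the checks described above, a billed amount that conflicts with the itemized list, is easy to express deterministically once the fields are extracted. This is a minimal sketch; the function name, the string-based amounts, and the tolerance are assumptions for illustration.

```python
from decimal import Decimal


def amount_conflict(claimed_total: str, line_items: list[str],
                    tolerance: str = "0.01") -> bool:
    """Return True when the claimed total disagrees with the itemized sum.

    Amounts are passed as strings and handled as Decimal to avoid
    floating-point rounding surprises on currency values.
    """
    total = Decimal(claimed_total)
    itemized = sum((Decimal(item) for item in line_items), Decimal("0"))
    return abs(total - itemized) > Decimal(tolerance)
```

A claim for which this returns `True` would be flagged for review instead of passing straight to adjudication.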

Multilingual Normalization

GenAI models trained on diverse language corpora normalize across scripts within a single prompt. A field that reads “madu meh” (a colloquial Indonesian shorthand for diabetes) maps to its correct ICD-10 code. A Chinese-language discharge summary and a Thai-language prescription in the same claim packet are processed together without requiring separate language pipelines.

The Comparison: OCR Alone vs GenAI Alone vs OCR+GenAI Pipeline

OCR alone digitizes but cannot interpret. GenAI alone hallucinates without grounded text input. The OCR+GenAI pipeline combines accurate text extraction with intelligent reasoning, achieving production-grade accuracy on complex APAC health claims.

| Approach | Key Strength | Best Used When | Key Limitation |
|---|---|---|---|
| OCR Alone | Fast, cost-efficient, deterministic output | Clean printed, single-language, standard-layout documents | Fails on handwriting, degraded scans, multilingual or unstructured content |
| GenAI Alone | Contextual reasoning, flexible prompting, multilingual by design | Structured digital text inputs where source text accuracy is guaranteed | Hallucinates without grounded text; expensive at volume; cannot read raw images directly |
| OCR + GenAI Pipeline | Accurate extraction combined with intelligent reasoning and rule-based validation | Complex APAC health claims: handwritten prescriptions, multi-language bills, mixed-format submissions | Higher infrastructure complexity; requires prompt engineering and a validation layer |

BCG (2025) frames this well: GenAI “in combination with other advanced technologies” can make claims processing more accurate, but it requires the ML tools and structured pipelines already in place. That is precisely the OCR+GenAI architecture described here.

Sample JSON Output: What the Difference Looks Like in Practice

The output gap between an OCR-only approach and an OCR+GenAI pipeline is visible in the JSON. An OCR-only pass on a handwritten Hindi prescription returns a garbled string of characters mixed with Unicode escape sequences. An OCR+GenAI pipeline returns a fully validated claim object with normalized field names, an ICD-10 code, reimbursable amount, and a confidence score.

The structured output is what an adjudication system can consume directly via a generative AI claims processing API. The raw OCR string requires a human to interpret it before any system can act on it. At scale, across millions of claims annually, that difference defines operational viability.
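As a purely illustrative sketch of that contrast, the snippet below places a garbled OCR string next to a structured claim object. Every value is an invented placeholder, and the field names are assumptions rather than the schema of any real API.

```python
import json

# All values below are invented placeholders for illustration only.

# What an OCR-only pass might hand downstream: one unstructured string with
# confusable characters (0/O, 8/B, l/1) and stray escape fragments.
ocr_only_output = "Am0x 5OO 8D x lOd \\u0930\\u094b ??"

# What an OCR+GenAI pipeline is expected to emit: normalized, typed fields.
pipeline_output = {
    "patient_id": "PLACEHOLDER-0001",
    "diagnosis_icd10": "J02.9",  # example code: acute pharyngitis, unspecified
    "medications": [
        {
            "name": "Amoxicillin",
            "strength_mg": 500,
            "doses_per_day": 2,
            "duration_days": 10,
        }
    ],
    "reimbursable_amount": {"value": "450.00", "currency": "INR"},
    "confidence": 0.91,
    "anomaly_flag": False,
}

print(ocr_only_output)
print(json.dumps(pipeline_output, indent=2))
```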

APAC-Specific Challenges That Make This Pipeline Non-Optional

APAC health claims involve handwritten regional scripts, mixed-language documents, and variable scan quality at a scale that makes any single-technology approach unworkable. Fullerton Health processes tens of millions of claims annually across nine APAC markets, each with distinct document formats, languages, and quality profiles.

The IDP market in Asia Pacific is projected to grow at a 36% CAGR through 2033, driven by expanding technology infrastructure and the need for automated document handling across diverse regulatory environments. The growth is real, but so is the complexity: BFSI accounts for 32.7% of IDP market share because the documents are genuinely hard.

Handwritten Hindi prescriptions require Devanagari-trained OCR before the LLM layer can operate on reliable text. Multi-column Indonesian hospital bills require layout-aware parsing that standard OCR cannot perform. Thai-English mixed receipts and Chinese-language discharge summaries require multilingual models that understand clinical vocabulary in both scripts simultaneously.

KPMG research cited in insurance AI analysis (Vega IT, 2025) shows that insurers such as Zurich Australia already use OCR, NLP, and machine learning together to streamline document ingestion and reduce manual handoffs. The OCR-alone era is ending. The question for APAC TPAs is whether their pipeline catches up before their competitors' pipelines do.

The InterPixels Pipeline: OCR to LLM Reasoning to Validation

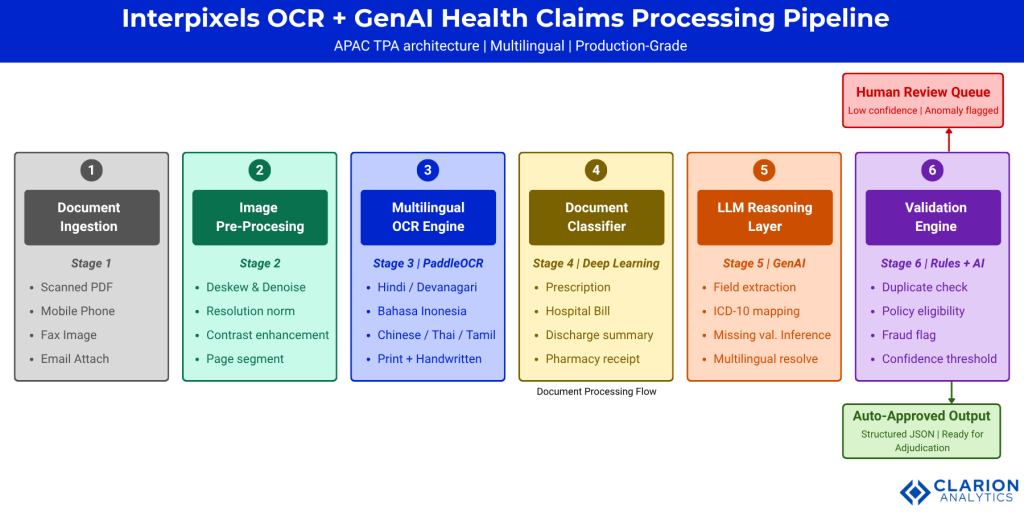

The InterPixels pipeline runs five operational stages: document ingestion and pre-processing, multilingual OCR extraction, deep learning document classification, LLM reasoning and field normalization, and a rule-based validation layer that flags anomalies before any claim reaches the adjudicator.

“Teams building this pipeline typically find that the validation layer, not the LLM itself, is the component that determines production readiness. Without it, GenAI confidence scores mean nothing operationally.”

Stage 1: Document Ingestion and Pre-Processing

Every incoming document, whether a mobile photograph, fax scan, or email attachment, enters a pre-processing stage that deskews the image, denoises it, normalizes resolution to a minimum threshold, and segments pages. This stage determines the quality of input the OCR engine receives. Poor pre-processing at Stage 1 propagates errors through every downstream stage.

Stage 2: Multilingual OCR

The PaddleOCR engine (60,000+ GitHub stars, 2025) supports 100+ languages, including Devanagari (Hindi), Bahasa Indonesia, Simplified and Traditional Chinese, Tamil, Thai, and Arabic. Its PP-OCRv5 model handles multilingual mixed documents in a single pass, and its PP-StructureV3 module converts complex multi-column layouts into structured Markdown and JSON that downstream models can consume. This is the primary reason PaddleOCR has become the standard OCR layer in APAC document pipelines.

Stage 3: Deep Learning Document Classification

Before the LLM reasoning layer runs, a document classifier determines whether the incoming document is a prescription, hospital bill, discharge summary, or pharmacy receipt. This classification step prevents the LLM from being prompted with incorrect field schemas. The Fullerton Health system described in the arXiv paper achieved 95%+ document type classification accuracy using a lightweight Logistic Regression classifier trained on their specific APAC document corpus.

Stage 4: LLM Reasoning and Field Normalization

The LLM receives the OCR text plus the document type classification and extracts structured fields: patient ID, diagnosis, ICD-10 code, medications with dosage and duration, claim amount, and provider details. It resolves ambiguities using clinical context, maps regional drug names and abbreviations to standardized equivalents, and applies schema-level type validation before outputting a JSON object.
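The schema-level type validation mentioned above can be sketched with the standard library alone. This is a minimal illustration, not the InterPixels implementation: the field names follow the list in the paragraph, while the expected types and the function name are assumptions.

```python
import json

# Hypothetical schema: field names follow the text; the types are assumptions.
CLAIM_SCHEMA = {
    "patient_id": str,
    "diagnosis": str,
    "icd10_code": str,
    "medications": list,
    "claim_amount": (int, float),
    "provider": str,
}


def validate_claim_json(raw: str) -> tuple[dict, list[str]]:
    """Parse LLM output and return (claim, errors); errors is empty when valid."""
    try:
        claim = json.loads(raw)
    except json.JSONDecodeError as exc:
        return {}, [f"invalid JSON: {exc.msg}"]
    if not isinstance(claim, dict):
        return {}, ["top-level value must be an object"]

    errors = []
    for field, expected in CLAIM_SCHEMA.items():
        if field not in claim:
            errors.append(f"missing field: {field}")
        # bool is a subclass of int in Python, so reject it explicitly.
        elif isinstance(claim[field], bool) or not isinstance(claim[field], expected):
            errors.append(f"wrong type for {field}")
    return claim, errors
```

Rejecting malformed output at this point keeps type errors out of the later stages, which can then assume every field is present and correctly typed.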

IBM Research’s Docling toolkit (37,000+ GitHub stars, 2025) demonstrates this architecture at the infrastructure level: converting unstructured scanned documents into structured JSON and Markdown that LLMs can consume reliably. In practice, teams building this pipeline find that prompt engineering for the LLM layer requires domain-specific clinical vocabulary and APAC-specific abbreviation lists to achieve consistent accuracy above 85%.

Stage 5: Validation and Output Routing

The final stage applies deterministic rules: duplicate claim detection, policy eligibility checks, amount plausibility checks against treatment type, and ICD-10 code validity verification. Claims that pass all checks are auto-approved and output as structured JSON to the adjudication system. Claims that fail any check, or fall below the confidence threshold, route to the human review queue with a flag explaining the failure reason.
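The routing logic can be sketched as a small rules function. This is an illustration under stated assumptions: the `Claim` fields, the 0.90 confidence threshold, and the simplified ICD-10 pattern are hypothetical, and the pattern checks format only, not membership in the official code set. Policy eligibility checks are omitted because they depend on insurer-specific data.

```python
import re
from dataclasses import dataclass

# Simplified format check: letter, two digits, optional dotted suffix.
ICD10_FORMAT = re.compile(r"^[A-Z][0-9]{2}(\.[0-9A-Z]{1,4})?$")
CONFIDENCE_THRESHOLD = 0.90  # assumed value; the source names no threshold


@dataclass(frozen=True)
class Claim:
    claim_id: str
    patient_id: str
    icd10_code: str
    amount: float
    confidence: float


def route_claim(claim: Claim, seen_claim_ids: set[str]) -> tuple[str, list[str]]:
    """Return (route, reasons); any failed rule sends the claim to review."""
    reasons = []
    if claim.claim_id in seen_claim_ids:
        reasons.append("duplicate claim")
    if not ICD10_FORMAT.match(claim.icd10_code):
        reasons.append("malformed ICD-10 code")
    if claim.amount <= 0:
        reasons.append("implausible amount")
    if claim.confidence < CONFIDENCE_THRESHOLD:
        reasons.append("confidence below threshold")
    seen_claim_ids.add(claim.claim_id)  # remember the ID for later duplicate checks
    return ("human_review" if reasons else "auto_approve", reasons)
```

Because the rules run regardless of the model's confidence score, a high-confidence claim with a malformed code is still routed to review, with the reasons attached for the reviewer.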

Figure 1: The InterPixels pipeline separates digitization (OCR), classification (deep learning), reasoning (LLM), and validation (rules engine) into discrete, auditable stages. Each component can be updated independently. GenAI outputs pass through a deterministic validation check before adjudication. Teams in regulated APAC markets can log each stage separately for compliance purposes.

Frequently Asked Questions: OCR and GenAI for Health Insurance Claims

What is the difference between OCR and GenAI in insurance claims processing?

OCR converts scanned document images into machine-readable text by detecting character shapes. GenAI uses large language models to understand that text, infer missing values, normalize multilingual content, and output a structured JSON claim object. OCR digitizes; GenAI interprets. Both are required in a production health claims pipeline.

Can OCR handle handwritten prescriptions in Hindi or Bahasa Indonesia?

Standard OCR engines produce character error rates above 30% on handwritten Devanagari, making the output clinically unusable. Specialized multilingual OCR engines such as PaddleOCR significantly reduce this error rate, but still require a downstream GenAI layer to resolve ambiguities, map abbreviations, and produce a validated structured output.

Why do APAC TPAs need both OCR and GenAI rather than just one?

GenAI alone cannot read raw document images. OCR alone cannot interpret context, resolve multilingual abbreviations, or infer missing fields. The OCR+GenAI pipeline combines the pixel-level text extraction of OCR with the contextual reasoning of LLMs. The Fullerton Health deployment across nine APAC markets reported 95% document classification accuracy and 87% field-level extraction accuracy at under two seconds per claim.

How accurate is a combined OCR and GenAI pipeline for health claims?

The Fullerton Health system (arXiv, January 2025), operating across nine APAC markets, achieved 95%+ document type classification accuracy and 87% field-level extraction accuracy at under two seconds per document. A separate JARET 2024 study found a 60% reduction in processing time and 12% accuracy improvement over traditional RPA-only systems.

What does a GenAI claims processing API output look like compared to OCR-only output?

An OCR-only pass on a handwritten Hindi prescription returns a raw string with character errors and Unicode fragments. An OCR+GenAI API output returns a validated JSON object with patient ID, ICD-10 diagnosis code, medication name and dosage, duration, claim amount, confidence score, and an anomaly flag. The latter is immediately consumable by an adjudication system without human correction.

Three Principles for Getting This Right

First, OCR and GenAI are complementary, not competing. The OCR layer provides the grounded text that prevents LLM hallucination. The GenAI layer provides the reasoning that makes OCR output actionable. Choosing between them is not the decision; sequencing them correctly is.

Second, the APAC document diversity problem is not a niche edge case. McKinsey (2025) estimates GenAI could generate between $50 billion and $70 billion in additional insurance revenue, with the strongest impact in document-heavy processes. The APAC TPA market sits precisely in that impact zone, and the document variability of the region makes a robust pipeline the prerequisite for capturing that value.

Third, the validation layer is the operational differentiator. Teams that deploy OCR and GenAI without a deterministic validation stage find that confidence scores are not a substitute for rule-based checks. A GenAI model that is 94% confident in an incorrect ICD-10 code is still incorrect. The validation layer catches that before the adjudicator does.

The question worth sitting with: if your current claims pipeline cannot process a handwritten Hindi prescription and output a validated JSON claim object in under two seconds, what is that latency costing you per million claims?
